Re: does flink support yarn/hdfs HA?

Posted by Till Rohrmann on

URL: http://deprecated-apache-flink-user-mailing-list-archive.369.s1.nabble.com/does-flink-support-yarn-hdfs-HA-tp16448p16453.html

Hi,

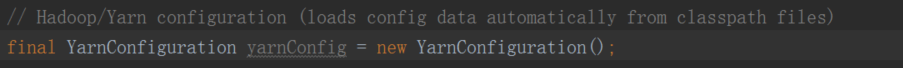

Looking at the code, the YarnConfiguration only loads core-site.xml and yarn-site.xml; it does not load hdfs-site.xml. This does not seem to be on purpose, so we should try to add it. I think the solution could be as easy as:

final YarnConfiguration yarnConfig = new YarnConfiguration(HadoopUtils.getHadoopConfiguration(flinkConfiguration));
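To illustrate what "loading from the environment" means for the YARN side, here is a minimal, standard-library-only sketch. It is not Flink's HadoopUtils implementation: the class name HadoopConfLocator, its methods, and the lookup order are assumptions made for illustration. It only shows how the directories named by HADOOP_CONF_DIR and YARN_CONF_DIR could be scanned for site files, including hdfs-site.xml.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;

// Hypothetical helper, not part of Flink or Hadoop.
public class HadoopConfLocator {

    // File names Hadoop conventionally uses for site-specific settings.
    static final String[] SITE_FILES = {"core-site.xml", "hdfs-site.xml", "yarn-site.xml"};

    /** Collects candidate config directories from the given environment, without duplicates. */
    static Set<File> candidateDirs(Map<String, String> env) {
        Set<File> dirs = new LinkedHashSet<>();
        // Assumed lookup order: HADOOP_CONF_DIR first, then YARN_CONF_DIR.
        for (String var : new String[] {"HADOOP_CONF_DIR", "YARN_CONF_DIR"}) {
            String value = env.get(var);
            if (value != null && !value.isEmpty()) {
                dirs.add(new File(value));
            }
        }
        return dirs;
    }

    /** Returns every site file that actually exists in one of the candidate directories. */
    static List<File> findSiteFiles(Map<String, String> env) {
        List<File> found = new ArrayList<>();
        for (File dir : candidateDirs(env)) {
            for (String name : SITE_FILES) {
                File candidate = new File(dir, name);
                if (candidate.isFile()) {
                    found.add(candidate);
                }
            }
        }
        return found;
    }

    public static void main(String[] args) {
        // With Hadoop on the classpath, each file found here could be handed to
        // Configuration.addResource(new Path(...)) before building the YarnConfiguration.
        for (File f : findSiteFiles(System.getenv())) {
            System.out.println("would add resource: " + f.getAbsolutePath());
        }
    }
}
```

Running this on a YARN node prints the site files that a classpath-only lookup might miss, which is a quick way to check whether hdfs-site.xml is reachable through the environment variables at all.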

Do you want to open a JIRA issue and a PR for this issue?

Cheers,

Till

On Fri, Oct 27, 2017 at 1:59 PM, 邓俊华 <[hidden email]> wrote:

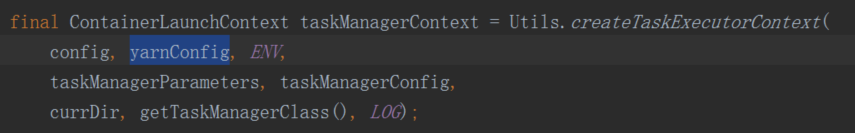

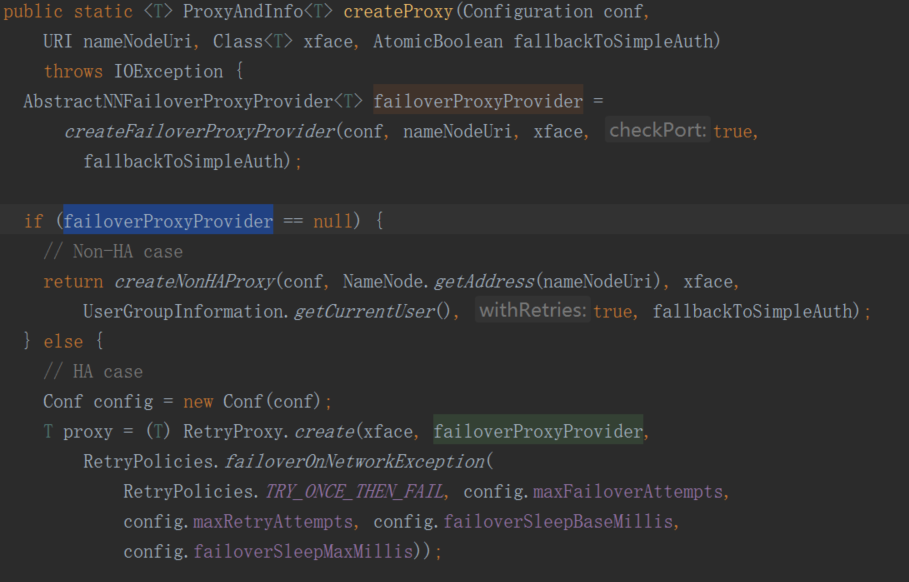

It passes "final YarnConfiguration yarnConfig = new YarnConfiguration();" in https://github.com/apache/flink/blob/master/flink-yarn/src/main/java/org/apache/flink/yarn/YarnApplicationMasterRunner.java, not the configuration from https://github.com/apache/flink/blob/master/flink-filesystems/flink-hadoop-fs/src/main/java/org/apache/flink/runtime/util/HadoopUtils.java

------------------------------------------------------------------

From: Stephan Ewen <[hidden email]>
Sent: Friday, October 27, 2017, 19:50
To: 邓俊华 <[hidden email]>
Cc: user <[hidden email]>; Stephan Ewen <[hidden email]>; Till Rohrmann <[hidden email]>
Subject: Re: does flink support yarn/hdfs HA?

Then it should probably work to apply a similar logic to the YarnConfiguration loading as to the Hadoop Filesystem configuration loading in https://github.com/apache/flink/blob/master/flink-filesystems/flink-hadoop-fs/src/main/java/org/apache/flink/runtime/util/HadoopUtils.java

On Fri, Oct 27, 2017 at 1:42 PM, 邓俊华 <[hidden email]> wrote:

Yes, every YARN cluster node has the *-site.xml files, and YARN_CONF_DIR and HADOOP_CONF_DIR are configured on every YARN cluster node.

------------------------------------------------------------------

From: Stephan Ewen <[hidden email]>
Sent: Friday, October 27, 2017, 19:38
To: user <[hidden email]>
Cc: 邓俊华 <[hidden email]>; Till Rohrmann <[hidden email]>
Subject: Re: does flink support yarn/hdfs HA?

Hi!

The HDFS config resolution logic is in [1]. It should take the environment variable into account.

The problem (bug) seems to be that in the Yarn Utils, the configuration used for Yarn (AppMaster) loads purely from the classpath, not from the environment variables. And that classpath does not include the proper hdfs-site.xml.

Now, there are two ways I could imagine fixing that:

(1) When instantiating the Yarn Configuration, also add resources from the environment variable as in [1].

(2) The client (which puts together all the resources that should be in the classpath of the processes running on Yarn) needs to add these Hadoop config files to the resources.

Are the environment variables YARN_CONF_DIR and HADOOP_CONF_DIR typically defined on the nodes of a Yarn cluster?

Stephan

On Fri, Oct 27, 2017 at 12:38 PM, 邓俊华 <[hidden email]> wrote:

Hi,

I currently run Spark Streaming on YARN with YARN/HA, and I want to migrate from Spark Streaming to Flink. But it doesn't work: Flink can't connect to the HDFS logical namenode URL and always throws the exception that follows. I traced the Flink code and found that something may be going wrong when it reads the Hadoop configuration. In the code, failoverProxyProvider is null, so it is treated as a non-HA case. The YarnConfiguration didn't read hdfs-site.xml to get the "dfs.client.failover.proxy.provider.startdt" value; only yarn-site.xml and core-site.xml are read. I have configured and exported YARN_CONF_DIR and HADOOP_CONF_DIR in /etc/profile, and I am sure the *-site.xml files are in that directory. I can also see the values in the environment. Can anybody give me some advice?

2017-10-25 21:02:14,721 DEBUG org.apache.hadoop.hdfs.BlockReaderLocal - dfs.domain.socket.path =

2017-10-25 21:02:14,755 ERROR org.apache.flink.yarn.YarnApplicationMasterRunner - YARN Application Master initialization failed

java.lang.IllegalArgumentException: java.net.UnknownHostException: startdt

at org.apache.hadoop.security.SecurityUtil.buildTokenService(SecurityUtil.java:378)

at org.apache.hadoop.hdfs.NameNodeProxies.createNonHAProxy(NameNodeProxies.java:310)

at org.apache.hadoop.hdfs.NameNodeProxies.createProxy(NameNodeProxies.java:176)

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:678)

at org.apache.hadoop.hdfs.DFSClient.<init>(DFSClient.java:619)

at org.apache.hadoop.hdfs.DistributedFileSystem.initialize(DistributedFileSystem.java:149)

at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:2669)

at org.apache.hadoop.fs.FileSystem.access$200(FileSystem.java:94)

at org.apache.hadoop.fs.FileSystem$Cache.getInternal(FileSystem.java:2703)

at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:2685)

at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:373)

at org.apache.hadoop.fs.Path.getFileSystem(Path.java:295)

at org.apache.flink.yarn.Utils.createTaskExecutorContext(Utils.java:385)

at org.apache.flink.yarn.YarnApplicationMasterRunner.runApplicationMaster(YarnApplicationMasterRunner.java:324)

at org.apache.flink.yarn.YarnApplicationMasterRunner$1.call(YarnApplicationMasterRunner.java:195)

at org.apache.flink.yarn.YarnApplicationMasterRunner$1.call(YarnApplicationMasterRunner.java:192)

at org.apache.flink.runtime.security.HadoopSecurityContext$1.run(HadoopSecurityContext.java:43)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:415)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1698)

at org.apache.flink.runtime.security.HadoopSecurityContext.runSecured(HadoopSecurityContext.java:40)

at org.apache.flink.yarn.YarnApplicationMasterRunner.run(YarnApplicationMasterRunner.java:192)

at org.apache.flink.yarn.YarnApplicationMasterRunner.main(YarnApplicationMasterRunner.java:116)

Caused by: java.net.UnknownHostException: startdt

... 23 more
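The trace goes through createNonHAProxy, which fits the diagnosis in the thread: without the failover provider key from hdfs-site.xml, the logical name "startdt" is treated as an ordinary hostname and DNS resolution fails. The following is a simplified, standard-library-only model of that decision, not Hadoop's actual NameNodeProxies code. The class HaLookupSketch, its methods, and the /flink path are made up for illustration; the key prefix and the nameservice name come from the thread.

```java
import java.net.URI;
import java.util.HashMap;
import java.util.Map;

// Hypothetical, simplified model of the HA vs. non-HA decision.
public class HaLookupSketch {

    // Key prefix quoted in the thread; the suffix is the logical nameservice name.
    static final String PROVIDER_PREFIX = "dfs.client.failover.proxy.provider.";

    /** A URI counts as HA only if a failover provider is configured for its host part. */
    static boolean isHaUri(Map<String, String> conf, URI nameNodeUri) {
        return conf.containsKey(PROVIDER_PREFIX + nameNodeUri.getHost());
    }

    /** Describes which path a client following this model would take. */
    static String describe(Map<String, String> conf, URI uri) {
        return isHaUri(conf, uri)
                ? "HA: use failover proxy provider " + conf.get(PROVIDER_PREFIX + uri.getHost())
                : "non-HA: resolve '" + uri.getHost() + "' via DNS";
    }

    public static void main(String[] args) {
        // The path component is an arbitrary example value.
        URI uri = URI.create("hdfs://startdt/flink");

        // Configuration as seen when hdfs-site.xml was never loaded.
        Map<String, String> withoutHdfsSite = new HashMap<>();

        // Configuration as seen when hdfs-site.xml supplies the provider key.
        Map<String, String> withHdfsSite = new HashMap<>();
        withHdfsSite.put(
                PROVIDER_PREFIX + "startdt",
                "org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider");

        System.out.println(describe(withoutHdfsSite, uri));
        System.out.println(describe(withHdfsSite, uri));
    }
}
```

In this model the first configuration falls through to the DNS branch, which corresponds to the UnknownHostException above, while the second one selects the failover provider.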
