Flink Kubernetes Libraries

|

Hi,

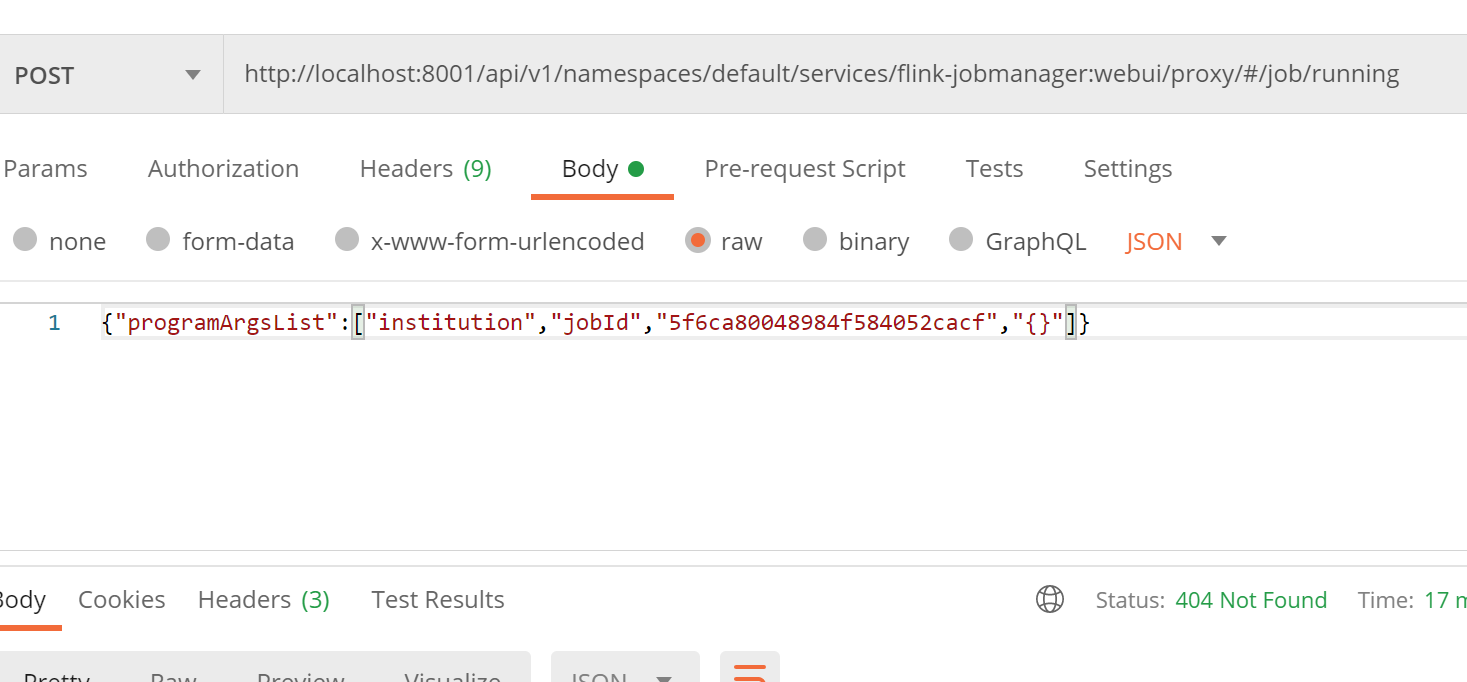

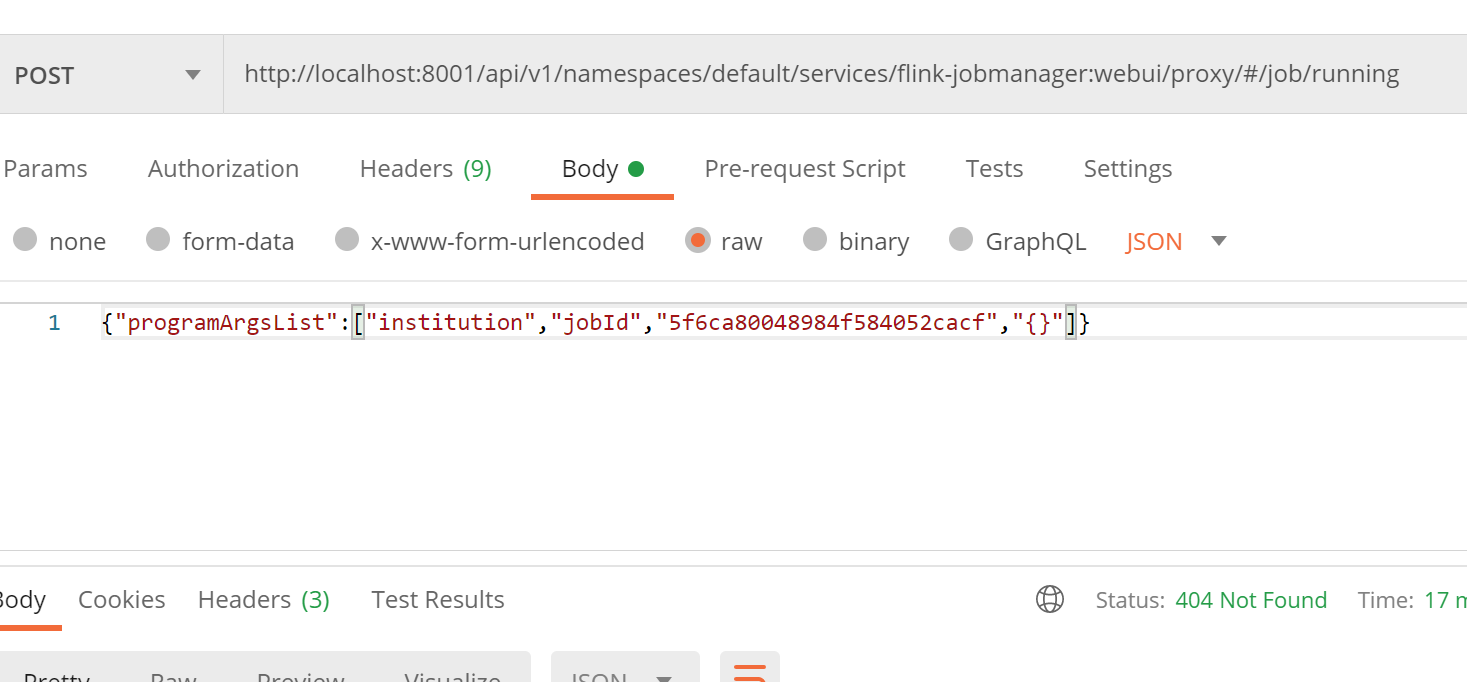

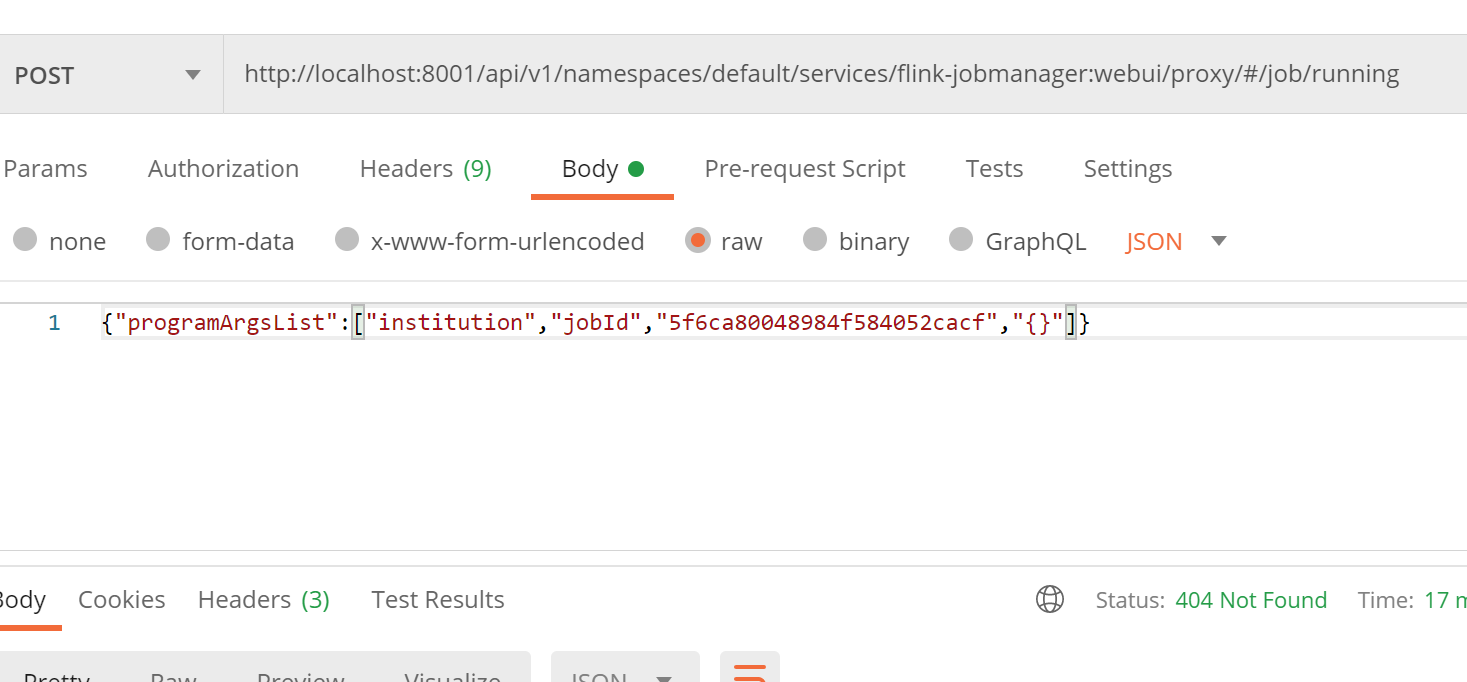

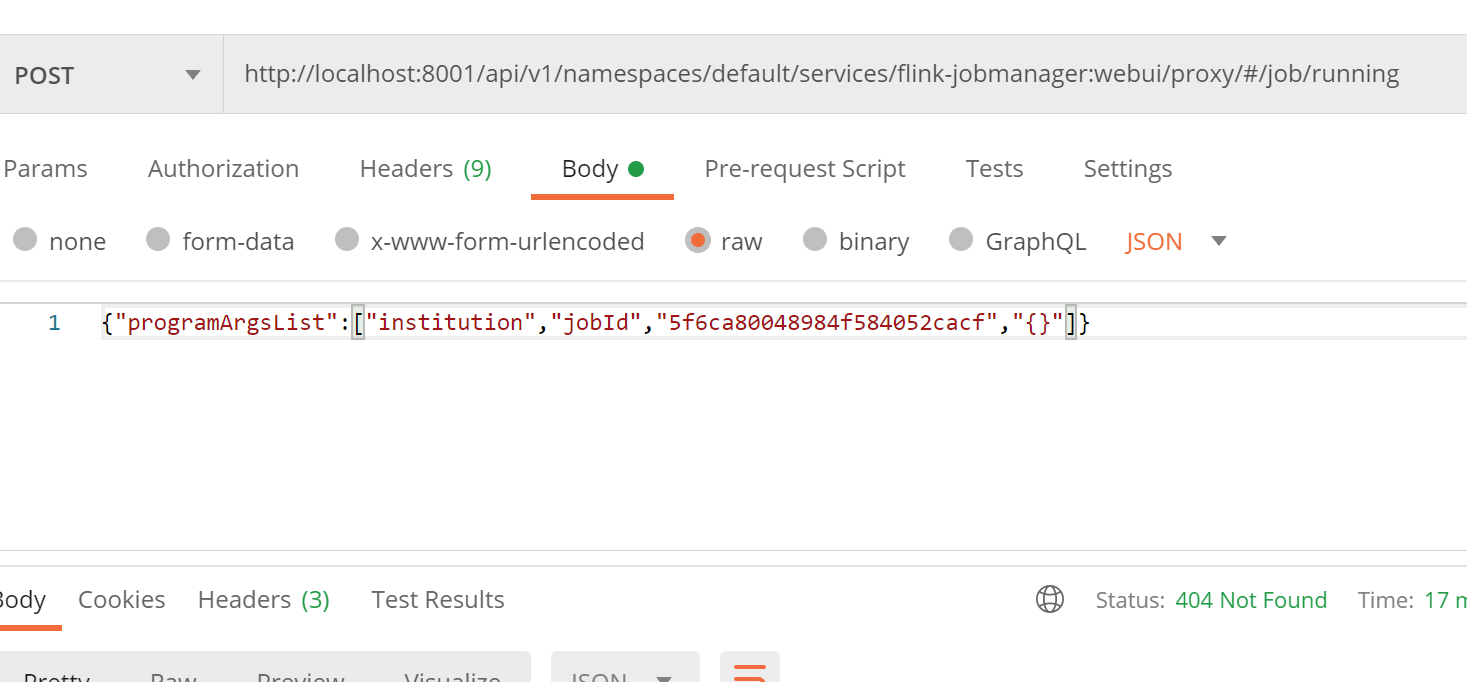

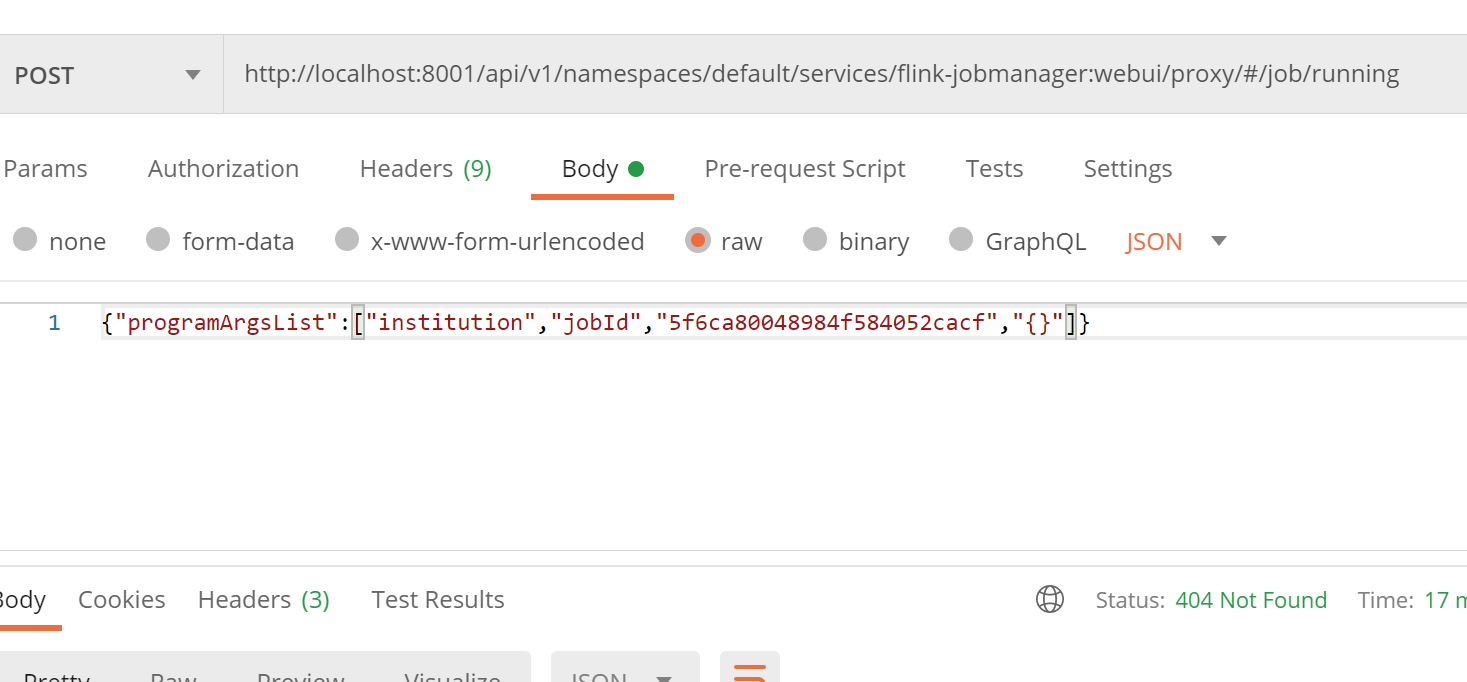

I configured Flink by following this page: https://ci.apache.org/projects/flink/flink-docs-release-1.11/ops/deployment/kubernetes.html, and I am able to open the Flink UI. However, I need to submit a job through Postman using http://localhost:8001/api/v1/namespaces/default/services/flink-jobmanager:webui/proxy/#/submit, and the job depends on some libraries. Locally I can add them to the lib folder, but how can I add my libraries in this setup so that the job works properly?

|

Hi Saksham,

the easiest approach would probably be to include the required libraries in the user code jar that you submit to the cluster. Maven's shade plugin should help with this task. Alternatively, you could create a custom Flink Docker image in which you add the required libraries to the FLINK_HOME/lib directory. This would, however, mean that every job you submit to the Flink cluster sees these libraries on the system class path.

Cheers,
Till

On Wed, Oct 7, 2020 at 2:08 PM saksham sapra <[hidden email]> wrote:

|

|

Hi Saksham,

if you want to extend the Flink Docker image, you can find more details here [1]. If you want to include the library in your user jar, then you have to add the library as a dependency to your pom.xml file and enable the shade plugin for building an uber jar [2].

Cheers,
Till

On Fri, Oct 9, 2020 at 3:22 PM saksham sapra <[hidden email]> wrote:

|
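For reference, the uber-jar approach described above could look roughly like the following pom.xml fragment. This is a minimal sketch, not the exact configuration from the linked documentation: the dependency coordinates (`com.example:my-library`), the main class (`com.example.MyFlinkJob`), and the plugin version are hypothetical placeholders and assumptions to be replaced with your own values.

```xml
<!-- Sketch only: coordinates, version numbers, and class names are placeholders. -->
<dependencies>
  <!-- A library the job needs at runtime; default (compile) scope so it is bundled. -->
  <dependency>
    <groupId>com.example</groupId>
    <artifactId>my-library</artifactId>
    <version>1.0.0</version>
  </dependency>
</dependencies>

<build>
  <plugins>
    <plugin>
      <groupId>org.apache.maven.plugins</groupId>
      <artifactId>maven-shade-plugin</artifactId>
      <version>3.2.4</version>
      <executions>
        <execution>
          <!-- Build the uber jar as part of "mvn package". -->
          <phase>package</phase>
          <goals>
            <goal>shade</goal>
          </goals>
          <configuration>
            <transformers>
              <!-- Merge META-INF/services files from all bundled jars. -->
              <transformer implementation="org.apache.maven.plugins.shade.resource.ServicesResourceTransformer"/>
              <!-- Set the entry point in the jar manifest. -->
              <transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">
                <mainClass>com.example.MyFlinkJob</mainClass>
              </transformer>
            </transformers>
          </configuration>
        </execution>
      </executions>
    </plugin>
  </plugins>
</build>
```

With a setup like this, `mvn package` should produce a single jar that contains the job classes together with the bundled library. Flink's own core dependencies are usually declared with `provided` scope so that they are not packed into the uber jar a second time.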

|

Hi Till,

Could you tell me how to configure HDFS as the state backend when I deploy Flink on k8s? I tried adding the following to flink-conf.yaml:

    state.backend: rocksdb
    state.checkpoints.dir: hdfs://slave2:8020/flink/checkpoints
    state.savepoints.dir: hdfs://slave2:8020/flink/savepoints
    state.backend.incremental: true

I also added flink-shaded-hadoop2-2.8.3-1.8.3.jar to /opt/flink/lib.

But it doesn’t work, and I got these error logs:

    Caused by: org.apache.flink.core.fs.UnsupportedFileSystemSchemeException: Could not find a file system implementation for scheme 'hdfs'. The scheme is not directly supported by Flink and no Hadoop file system to support this scheme could be loaded. For a full list of supported file systems, please see https://ci.apache.org/projects/flink/flink-docs-stable/ops/filesystems/.
    Caused by: org.apache.flink.core.fs.UnsupportedFileSystemSchemeException: Cannot support file system for 'hdfs' via Hadoop, because Hadoop is not in the classpath, or some classes are missing from the classpath
    Caused by: java.lang.NoClassDefFoundError: Could not initialize class org.apache.flink.runtime.util.HadoopUtils

On 10/09/2020 22:13, [hidden email] wrote:

|

|

Hi Superainbower,

could you share the complete logs with us? They show which Flink version you are using and the classpath the JVM is started with. Have you checked whether the same problem occurs with the latest Flink version?

Cheers,
Till

On Mon, Oct 12, 2020 at 10:32 AM superainbower <[hidden email]> wrote:

|


Return to (DEPRECATED) Apache Flink User Mailing List archive.
